AWS Bedrock Knowledge Bases simplifies RAG

Amazon Bedrock Knowledge Bases helps teams build RAG apps with managed ingestion, retrieval, citations, and structured-data queries.

Amazon Bedrock Knowledge Bases is AWS’s managed RAG layer for grounding AI in private data.

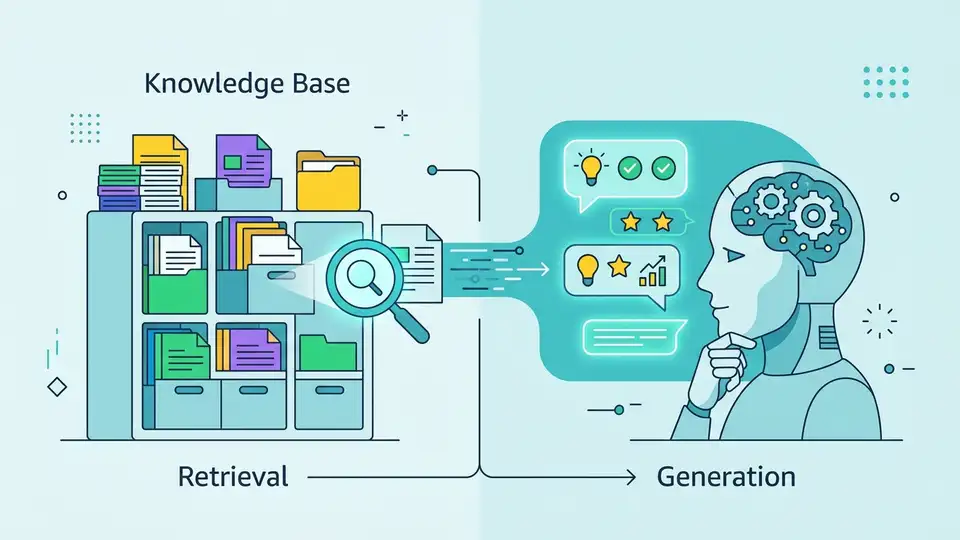

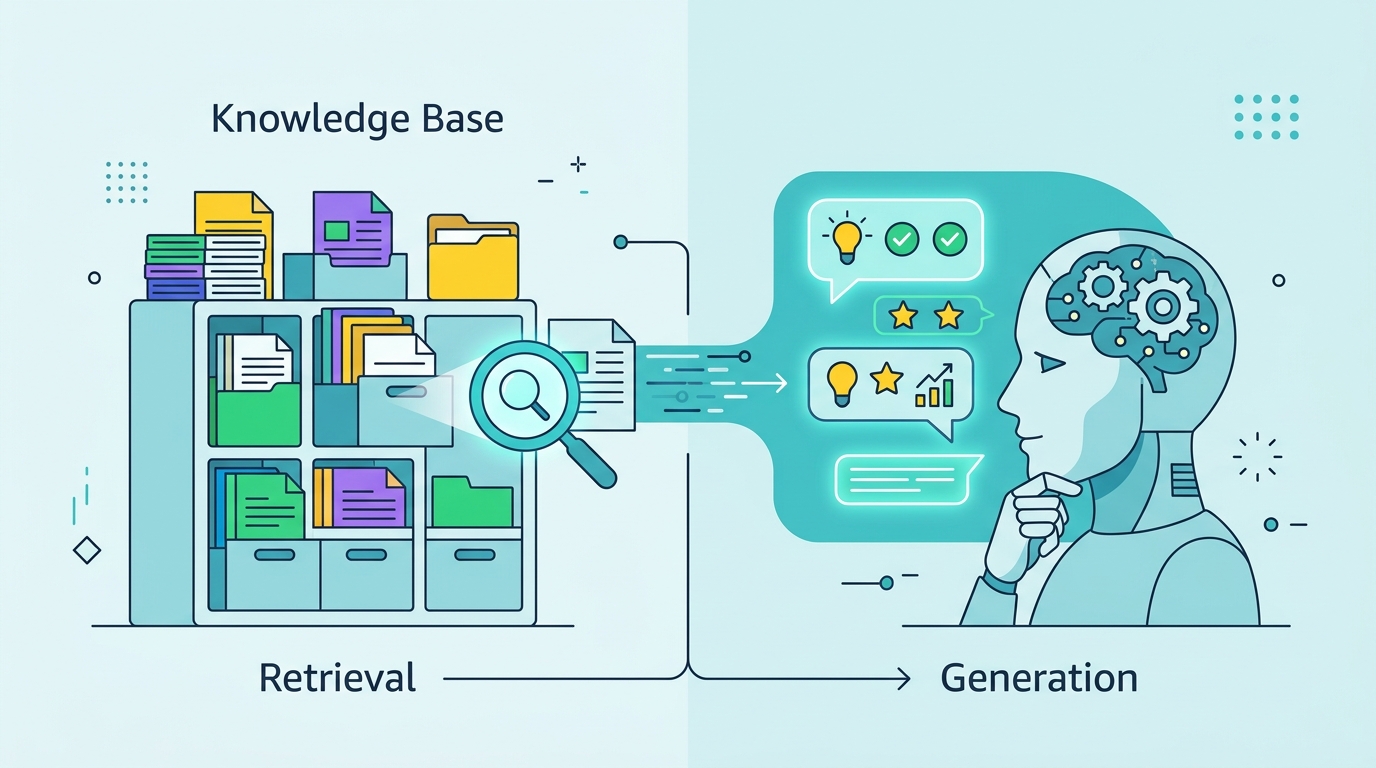

Amazon Web Services says Amazon Bedrock Knowledge Bases can connect foundation models to private company data without forcing teams to stitch together every piece of the retrieval stack themselves. The pitch is simple: ingest data, retrieve the right chunks, augment the prompt, and return answers with citations.

That matters because RAG projects often fail in the boring middle. Teams can usually get a prototype working, then spend weeks wiring up chunking, embeddings, vector storage, filters, and source tracking. AWS is trying to package those moving parts into one managed service.

| Capability | AWS claim | Why it matters |
|---|---|---|
| Workflow coverage | End-to-end RAG | Ingestion, retrieval, and prompt augmentation live in one service |
| Data sources | S3, Confluence, Salesforce, SharePoint, Web Crawler (preview) | Teams can connect common enterprise systems faster |
| Vector stores | Aurora, OpenSearch Serverless, Neptune Analytics, MongoDB, Pinecone, Redis Enterprise Cloud | Organizations can keep existing storage choices in play |
| Retrieval APIs | Retrieve and RetrieveAndGenerate | Developers can either inspect results or generate answers directly |
| Source attribution | Citations included | Users can see where answers came from |

What AWS is actually shipping

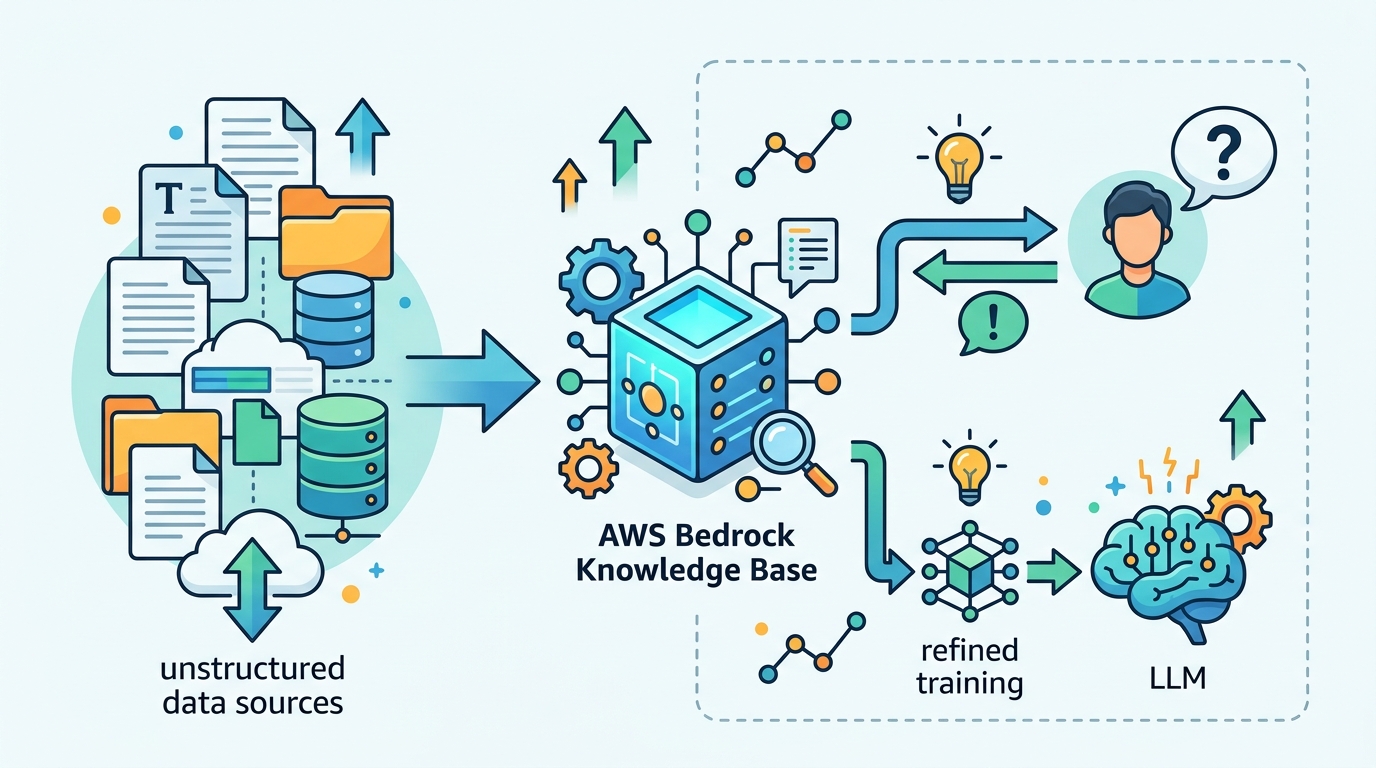

Amazon Bedrock Knowledge Bases is a managed RAG feature inside Amazon Bedrock. AWS says it can pull from unstructured sources such as Amazon S3, Confluence, Salesforce, and SharePoint, with Web Crawler in preview.

The service also supports programmatic document ingestion, which is the part that matters if your data arrives as a stream or lives in a system AWS does not support yet. Once ingested, the content gets split into text blocks, converted into embeddings, and stored in a vector database.

That vector layer is not locked to one vendor. AWS lists Amazon Aurora, Amazon OpenSearch Serverless, Amazon Neptune Analytics, MongoDB, Pinecone, and Redis Enterprise Cloud as supported options.

- Ingestion from common enterprise systems

- Embeddings stored in a chosen vector store

- Built-in retrieval and answer generation APIs

- Citations attached to retrieved content
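
For a connected data source, the ingestion step can be driven from code. The sketch below assumes a boto3 `bedrock-agent` client and its `start_ingestion_job` and `get_ingestion_job` calls. The helper name `run_ingestion`, the polling interval, and the injectable `sleep` parameter are hypothetical choices for this illustration, not part of the AWS API.

```python
import time
from typing import Any, Callable

# Job states after which polling should stop (assumed from the boto3 docs).
TERMINAL_STATUSES = {"COMPLETE", "FAILED", "STOPPED"}


def run_ingestion(client: Any, kb_id: str, data_source_id: str,
                  poll_seconds: float = 10.0,
                  sleep: Callable[[float], None] = time.sleep) -> str:
    """Start a sync of one data source and wait until the job finishes.

    `client` is expected to behave like boto3.client("bedrock-agent").
    Returns the final job status string.
    """
    job = client.start_ingestion_job(
        knowledgeBaseId=kb_id, dataSourceId=data_source_id
    )["ingestionJob"]

    # Poll until the job reaches a terminal state.
    while job["status"] not in TERMINAL_STATUSES:
        sleep(poll_seconds)
        job = client.get_ingestion_job(
            knowledgeBaseId=kb_id,
            dataSourceId=data_source_id,
            ingestionJobId=job["ingestionJobId"],
        )["ingestionJob"]
    return job["status"]
```

With real credentials this would be called as `run_ingestion(boto3.client("bedrock-agent"), kb_id, data_source_id)`, where both IDs are placeholders for values from your own account. Passing the client in, rather than creating it inside the function, keeps the helper easy to exercise with a stub.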

Why the structured-data angle matters

One of the more useful details in AWS’s announcement is support for structured sources. Instead of copying warehouse or data lake data into another store, Bedrock Knowledge Bases can translate a natural-language question into SQL and run the query against the source system.

That is a practical answer to a problem many teams hit quickly: unstructured search is only half the story. Real business questions often live in transactional tables, reporting warehouses, and other structured systems where a vector index alone is not enough.

AWS also says the service can answer questions and summarize a single document without requiring a vector database at all. For small or narrowly scoped use cases, that lowers the barrier to entry and avoids the extra setup that usually slows early experimentation.
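
As a rough illustration of the single-document path, the sketch below builds the arguments for a `retrieve_and_generate` call that points at one S3 object instead of a knowledge base. The field names follow the boto3 `bedrock-agent-runtime` request shape as I understand it and should be checked against the current API reference. The helper name `build_single_document_request` and every URI or ARN passed to it are hypothetical.

```python
from typing import Any


def build_single_document_request(question: str, document_uri: str,
                                  model_arn: str) -> dict[str, Any]:
    """Build kwargs for retrieve_and_generate against one S3 document.

    No knowledge base ID appears here: the document itself is the source.
    """
    return {
        "input": {"text": question},
        "retrieveAndGenerateConfiguration": {
            "type": "EXTERNAL_SOURCES",
            "externalSourcesConfiguration": {
                "modelArn": model_arn,
                "sources": [
                    {"sourceType": "S3", "s3Location": {"uri": document_uri}}
                ],
            },
        },
    }
```

The resulting dictionary would be unpacked into the client call, for example `boto3.client("bedrock-agent-runtime").retrieve_and_generate(**request)`.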

How it handles messy enterprise content

AWS is also leaning into multimodal retrieval. The service can parse documents with tables, figures, charts, diagrams, images, audio, and video, then return those elements as part of retrieval.

For parsing, AWS says you can use Bedrock Data Automation or foundation models as the parser. That matters for contracts, slide decks, financial reports, and technical documentation where layout carries meaning.

The chunking options are more flexible than the usual “split on every N tokens” approach. AWS lists semantic chunking, hierarchical chunking, fixed-size chunking, and custom chunking through Lambda. It also says teams can plug in components from LangChain and LlamaIndex.
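
To make the fixed-size option concrete, here is a minimal sketch of fixed-size chunking with overlap. It illustrates the general technique only and is not AWS's implementation. The function name `chunk_fixed`, the whitespace tokenization, and the default sizes are assumptions chosen for the example.

```python
def chunk_fixed(text: str, max_tokens: int = 300, overlap: int = 30) -> list[str]:
    """Split text into chunks of at most max_tokens whitespace tokens.

    Consecutive chunks share `overlap` tokens, so content near a boundary
    appears in both neighboring chunks.
    """
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")

    tokens = text.split()  # crude stand-in for a real tokenizer
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + max_tokens]))
        if start + max_tokens >= len(tokens):
            break  # this window already reached the end of the text
    return chunks
```

Semantic and hierarchical chunking replace the fixed window with boundaries derived from meaning or document structure, which is why they tend to suit long reports better than a flat token count.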

“Retrieval augmented generation is a way to help a language model generate answers using information from outside its training data,” said Rohit Prasad, senior vice president and head scientist for Amazon Bedrock.

What makes the retrieval layer different

Bedrock Knowledge Bases does more than return chunks. AWS says it can use reranker models to improve relevance across text, visual, and multimedia content, which is important because retrieval quality often matters more than model choice once the app is in production.

There is also a graph angle. If you choose Amazon Neptune Analytics as the vector store, AWS says the service can automatically create embeddings and graphs that connect related content across sources. It then uses those relationships with GraphRAG to improve retrieval accuracy and explainability.

- Retrieve API: returns relevant results for a query, including images, tables, audio, and video

- RetrieveAndGenerate API: uses retrieved results to augment the prompt and generate the response

- Filters: can be explicit or generated implicitly by the model

- Reranking: improves ranking across multiple content types

That combination matters because many retrieval systems are good at finding text and mediocre at finding the best answer. AWS is trying to reduce that gap by making retrieval, ranking, and attribution part of the same service instead of separate plumbing tasks.
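
The two APIs and the citation data they return can be sketched as follows. The request and response field names follow the boto3 `bedrock-agent-runtime` client as I understand it. The helper names, the example filter key, and the assumption that sources live in S3 are hypothetical choices for this illustration.

```python
from typing import Any, Optional


def build_retrieve_request(kb_id: str, query: str, top_k: int = 5,
                           metadata_filter: Optional[dict] = None) -> dict[str, Any]:
    """Kwargs for `retrieve`: return ranked chunks without generating text."""
    vector_config: dict[str, Any] = {"numberOfResults": top_k}
    if metadata_filter is not None:
        # Explicit filter, e.g. {"equals": {"key": "team", "value": "legal"}}
        vector_config["filter"] = metadata_filter
    return {
        "knowledgeBaseId": kb_id,
        "retrievalQuery": {"text": query},
        "retrievalConfiguration": {"vectorSearchConfiguration": vector_config},
    }


def build_rag_request(kb_id: str, query: str, model_arn: str) -> dict[str, Any]:
    """Kwargs for `retrieve_and_generate`: retrieve, augment, and answer."""
    return {
        "input": {"text": query},
        "retrieveAndGenerateConfiguration": {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,
                "modelArn": model_arn,
            },
        },
    }


def extract_citations(response: dict) -> list[dict]:
    """Flatten a retrieve_and_generate response into span/source pairs."""
    pairs = []
    for citation in response.get("citations", []):
        span = (citation.get("generatedResponsePart", {})
                .get("textResponsePart", {})
                .get("text", ""))
        for ref in citation.get("retrievedReferences", []):
            # Only S3 locations are handled here; other source types yield None.
            uri = ref.get("location", {}).get("s3Location", {}).get("uri")
            pairs.append({"span": span, "source": uri})
    return pairs
```

In use, a request would be unpacked into the client call, for example `client.retrieve_and_generate(**build_rag_request(kb_id, question, model_arn))` with `client = boto3.client("bedrock-agent-runtime")`, and the response passed to `extract_citations` to show users which source backs each part of the answer.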

What this means for teams building AI apps

The big tradeoff here is control versus speed. A custom RAG stack can be tuned in painful detail, but it also takes real engineering time to keep ingestion, retrieval, filters, and citations working as data changes.

Amazon Bedrock Knowledge Bases cuts down that setup work, especially for teams already building on AWS. It is most attractive when you want grounded answers from internal documents, warehouses, and enterprise apps without spending a sprint on infrastructure glue.

The flip side is dependency. Once your retrieval flow sits inside a managed service, you inherit AWS’s supported sources, parsers, and APIs. For many teams that is a good trade. For others, especially those with unusual data systems or strict portability requirements, a custom stack may still make more sense.

If you are evaluating RAG tooling this year, the real question is not whether Bedrock Knowledge Bases can do the job. It can. The question is whether you want AWS to own the retrieval layer, or whether your use case needs enough custom behavior that you would rather keep that control in house.
