The Best Free LLM APIs of 2026: 30+ Platforms Tested

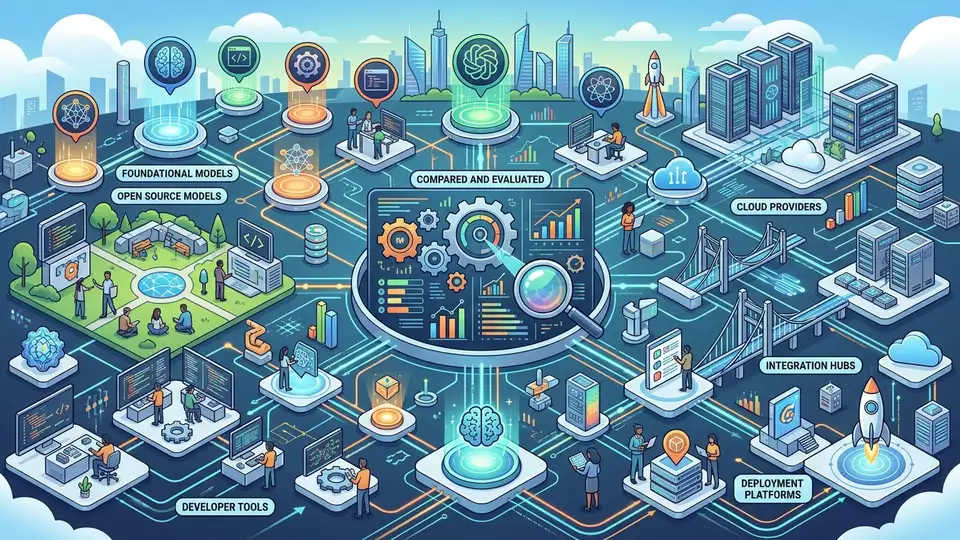

The free LLM API market has matured into a competitive ecosystem with over 30 viable options. From China's Zhipu AI and Kimi to global giants like Google AI Studio, GitHub Models, and speed-focused Groq, developers face genuine choices rather than scarcity. This guide compares quotas, rate limits, model coverage, and real-world use cases.

By 2026, free API access has stopped being a privilege and become a baseline expectation. Whether you're prototyping a side project, evaluating models for production use, or building on a student budget, at least a dozen platforms will accept your request without a credit card. The challenge now isn't finding free access — it's choosing wisely among too many options.

China's Fragmented but Feature-Rich Ecosystem

Zhipu AI stands out with a rare commitment: GLM-4-Flash is permanently free rather than a limited-time offer. New users receive a one-time allocation of 20 million tokens, enough for thousands of real-world API calls. The platform supports 30 concurrent requests per second, which is reasonable for proof-of-concept (POC) work. The clarity matters more than the numbers: no surprise sunsetting, and no artificial restrictions designed to upsell you later.
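To make "thousands of calls" concrete, here is a minimal sketch of the arithmetic. The 20 million token figure comes from the text above; the per-call token counts are hypothetical workloads chosen for illustration, not measurements.

```python
def calls_within_budget(token_budget: int, tokens_per_call: int) -> int:
    """Return how many whole calls fit inside a fixed token allocation."""
    if tokens_per_call <= 0:
        raise ValueError("tokens_per_call must be positive")
    return token_budget // tokens_per_call


NEW_USER_TOKENS = 20_000_000  # allocation quoted in the article

# Hypothetical workloads: prompt plus completion tokens per call.
WORKLOADS = [
    ("short chat turn", 500),
    ("retrieval-augmented answer", 4_000),
    ("long document summary", 20_000),
]

for label, per_call in WORKLOADS:
    calls = calls_within_budget(NEW_USER_TOKENS, per_call)
    print(f"{label}: about {calls:,} calls")
```

Under these assumptions the allocation covers between 1,000 and 40,000 calls, so the real number depends mostly on how long your prompts are.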

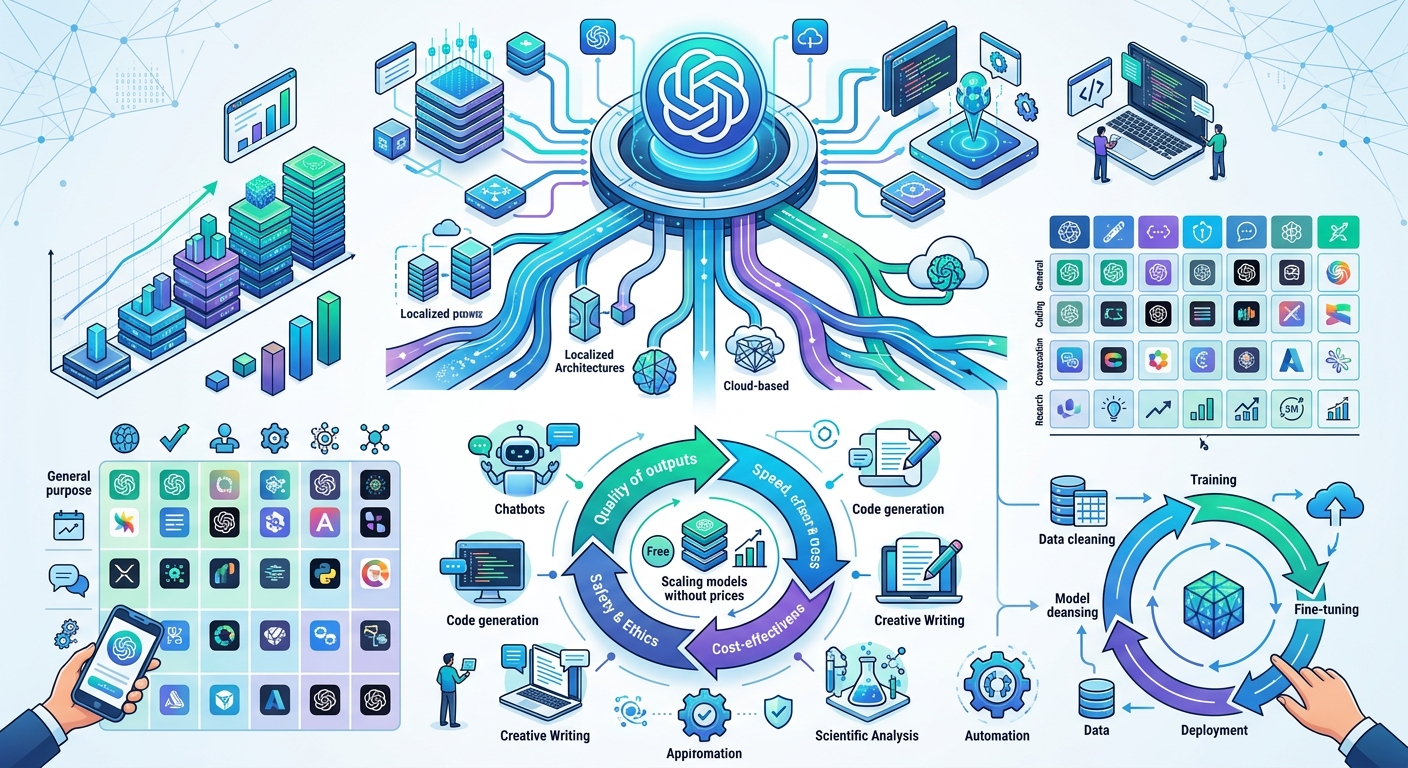

Kimi (Moonshot AI) competes on a different edge: a 256K-token context window. This matters if you process long documents, entire codebases, or research papers in a single request. Rate limits are modest at 3 requests per minute, but token budgets are unmetered. For batch processing or one-shot analysis tasks, that trade-off beats platforms with tighter token quotas.
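A limit of 3 requests per minute is easiest to respect on the client side by spacing calls 20 seconds apart. The sketch below is one way to do that; the class name and its injectable `clock` and `sleep` parameters are illustrative choices, not part of any vendor SDK.

```python
import time
from typing import Callable, Optional


class MinIntervalThrottle:
    """Space calls at least 60 / requests_per_minute seconds apart."""

    def __init__(
        self,
        requests_per_minute: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        if requests_per_minute <= 0:
            raise ValueError("requests_per_minute must be positive")
        self.interval = 60.0 / requests_per_minute
        self._clock = clock
        self._sleep = sleep
        self._next_allowed: Optional[float] = None

    def wait(self) -> float:
        """Block until the next call is allowed; return the seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._next_allowed is not None and now < self._next_allowed:
            delay = self._next_allowed - now
            self._sleep(delay)
            # Never schedule from a time earlier than the slot we waited for.
            now = max(self._clock(), self._next_allowed)
        self._next_allowed = now + self.interval
        return delay
```

Calling `throttle.wait()` immediately before each request keeps a batch job under the limit without needing to parse error responses. For a 3 RPM limit, `MinIntervalThrottle(3).interval` is 20 seconds.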

SiliconFlow aggregates open-source models (DeepSeek, Qwen) under unified API management. Its limit of 1,000 requests per minute (RPM) per model is respectable for staging environments. If you want to benchmark multiple open models without maintaining separate SDK integrations, consolidating here saves engineering time.

ByteDance's Doubao, Alibaba's Qwen, Baidu's Ernie, Tencent's HunYuan, and iFLYTEK's Spark all offer free tiers, but access typically requires active application or promotional campaigns. They function as user acquisition channels rather than unlimited free services.

International Platforms: Mature Infrastructure, Generous Quotas

Google AI Studio's quotas are nearly shocking by 2025 standards. Gemini 2.5 Flash allows 30 requests per minute and 1,440 per day, enough for a small production application. The multi-modal capability (images, audio, video) matters too: you get text-to-image and document understanding without separate services. Google's compute capacity also makes high uptime a practical expectation rather than a theoretical one.

GitHub Models removes friction through authentication convenience alone. If you already live in GitHub, you skip onboarding entirely. GPT-4o and GPT-4 Turbo are available on a trial basis (15 requests per minute, 150 requests per day). The limits are tight, but the ease of access matters for rapid prototyping.
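Per-minute and per-day limits describe different things, and it helps to convert both into a sustained rate. The sketch below uses the figures quoted above for Gemini 2.5 Flash and the GitHub Models trial; the function name and output keys are illustrative.

```python
MINUTES_PER_DAY = 24 * 60


def quota_profile(rpm: int, rpd: int) -> dict:
    """Summarise a requests-per-minute plus requests-per-day quota pair."""
    return {
        # Average rate you can hold for a full day without hitting the daily cap.
        "sustained_rpm": rpd / MINUTES_PER_DAY,
        # How long the daily cap lasts if you burst at the per-minute limit.
        "minutes_at_full_rate": rpd / rpm,
    }


for name, rpm, rpd in [
    ("Gemini 2.5 Flash", 30, 1440),
    ("GitHub Models trial", 15, 150),
]:
    p = quota_profile(rpm, rpd)
    print(
        f"{name}: {p['sustained_rpm']:.2f} req/min sustained, "
        f"daily cap reached after {p['minutes_at_full_rate']:.0f} min at full rate"
    )
```

With the Gemini figures this works out to one request per minute sustained, or 48 minutes of bursting at the full rate. The GitHub Models trial lasts 10 minutes at its full rate, which is why it suits prototyping rather than always-on workloads.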

Groq optimizes for a different metric: latency. Its LPU (Language Processing Unit) hardware produces output 5-10x faster than standard GPU inference. The free tier covers 1,000 requests per day, which suits interactive applications where response speed is a feature. Streaming responses make the speed advantage visible in real time.

Cloudflare Workers AI leverages Cloudflare's global CDN presence. The free tier includes 10,000 Neurons per day, with inference executed at edge nodes near your users, so sub-100ms latency becomes achievable for geographically distributed workloads.

OpenRouter unifies fragmented supply: you can access Mistral, Cerebras, Meta's Llama, and others through one API contract. It is also reachable from China without proxies.
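In practice, "one API contract" means that switching models is a one-string change. The sketch below builds a request against OpenRouter's OpenAI-compatible chat completions endpoint using only the standard library, and stops short of sending it. The model identifiers and the API key are placeholders assumed for illustration, so check the provider's catalogue for current names.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the given model."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Illustrative model ids (assumptions); only the "model" field changes per call.
for model in ["mistralai/mistral-7b-instruct", "meta-llama/llama-3.1-8b-instruct"]:
    req = build_chat_request("sk-placeholder", model, "Say hello in one word.")
    print(req.get_method(), req.full_url, json.loads(req.data)["model"])
    # To send for real, pass req to urllib.request.urlopen with a valid key.
```

Keeping request construction separate from sending also makes it straightforward to unit-test the payload without network access.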

Third-Party Proxies and Risk Acceptance

Services like ChatAnywhere and API520 promise unified interfaces and geo-flexibility. The trade-off: an extra network hop, credential exposure to a third party, and policy risk if upstream relationships change. Staging and experimentation? Fine. Production? Avoid.

Decision Framework

Choose based on workload shape, not just headline quotas. Learning and exploration: Google AI Studio or GitHub Models. Production-adjacent with multi-model support: OpenRouter. Super-long context: Kimi. Speed-critical: Groq. Multi-modal needs: Gemini.

Handle rate limits gracefully, because degradation is inevitable. Assume free policies will tighten. Keep production APIs on paid accounts. Diversify across 2-3 platforms to avoid a single point of failure.
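The two pieces of advice above, graceful rate-limit handling and diversification, combine naturally into retry-with-backoff plus provider fallback. The following is a minimal sketch under stated assumptions: `RateLimited` is a hypothetical exception that your own provider wrappers would raise on a throttling response such as HTTP 429, and each provider is any callable that takes a prompt and returns text.

```python
import random
import time
from typing import Callable, Sequence, Tuple


class RateLimited(Exception):
    """Raised by a provider wrapper when the upstream API throttles the call."""


Provider = Callable[[str], str]


def call_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Provider]],
    max_retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[str, str]:
    """Try each provider in order, backing off exponentially on rate limits.

    Returns (provider_name, response_text) from the first provider that answers.
    """
    for name, provider in providers:
        for attempt in range(max_retries):
            try:
                return name, provider(prompt)
            except RateLimited:
                if attempt == max_retries - 1:
                    break  # retries exhausted, move on to the next provider
                delay = base_delay * (2 ** attempt)
                # Small random jitter so parallel clients do not retry in lockstep.
                sleep(delay + random.uniform(0, delay * 0.1))
    raise RuntimeError("all providers are rate limited")
```

List providers in order of preference, for example a fast primary followed by one or two alternatives. Only rate-limit errors trigger retries here; other exceptions propagate to the caller so that real bugs are not hidden behind a fallback.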
