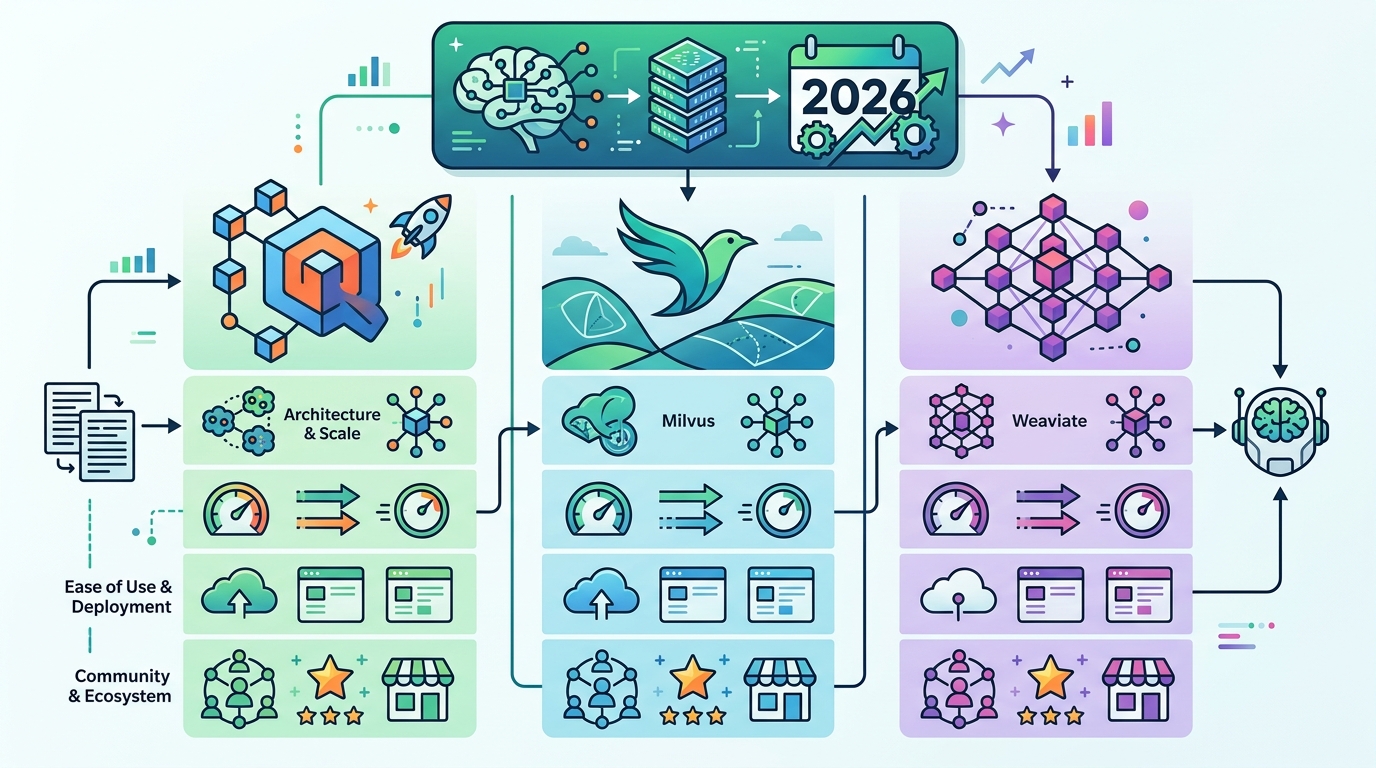

Qdrant vs Milvus vs Weaviate for RAG in 2026

Qdrant, Milvus, and Weaviate power different RAG needs in 2026. Here’s how they compare on latency, scale, hybrid search, and cost.

In 2026, the vector database choice for RAG is less about brand loyalty and more about hard tradeoffs. Qdrant reports sub-100ms latency on 100 million vectors, Milvus targets billion-scale deployments with 100,000+ QPS in benchmark claims, and Weaviate pushes hybrid search with BM25 plus vector ranking in one query path.

If you are building a retrieval layer for an AI app, those numbers matter more than marketing. They affect whether your chatbot feels instant, whether your recommendation engine keeps up under load, and whether your team spends the next quarter tuning indexes instead of shipping product.

What each database is optimized for

The three databases solve the same broad problem, but they approach it from different directions. Qdrant leans into low-latency search and metadata filtering, Milvus focuses on distributed scale and GPU acceleration, and Weaviate combines vector search with keyword search and model integration.

That split matters because RAG systems rarely fail on embeddings alone. They fail when retrieval becomes too slow, when filters are awkward, or when the database cannot keep up with growth. The best tool is the one that matches your bottleneck.

Qdrant is written in Rust, which gives it a strong memory-efficiency story and a reputation for predictable latency. Milvus is built for clusters, which makes it attractive when your dataset is too large for a single machine to carry the load. Weaviate is the friendliest of the three for teams that want hybrid retrieval without stitching together multiple services.

- Qdrant: Rust-based, strong payload filtering, fast single-node performance

- Milvus: distributed architecture, GPU options, billion-vector scale

- Weaviate: hybrid search, GraphQL API, ML-friendly integrations

Architecture is where the differences become real

Architecture decides how painful the database feels after the proof-of-concept phase. Qdrant keeps the stack relatively focused, with an emphasis on vector search plus rich filtering. That makes it appealing for teams that want fast retrieval over a moderate to large corpus without turning the infrastructure into a science project.

Milvus takes the opposite route. It is built around horizontal scaling, multiple index choices, and enterprise deployment patterns. If your product needs to search across billions of vectors, or if you want to spread load across nodes and GPUs, Milvus is designed for that kind of pressure.

Weaviate sits in the middle, but its real edge is how it blends search modes. Its hybrid approach lets you mix vector similarity with lexical ranking, which is useful when users search with exact terms, partial phrases, and semantic intent in the same request.
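One way to picture hybrid ranking is as a weighted blend of two normalized score lists. The sketch below is a generic illustration in plain Python, not Weaviate's implementation. The function names and sample scores are hypothetical, and the `alpha` convention (1.0 for pure vector ranking, 0.0 for pure keyword ranking) follows the one Weaviate documents for its hybrid queries.

```python
from typing import Dict, List, Tuple


def min_max_normalize(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale scores to [0, 1]; if every score is equal, map each to 1.0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_rank(
    keyword_scores: Dict[str, float],
    vector_scores: Dict[str, float],
    alpha: float = 0.5,
) -> List[Tuple[str, float]]:
    """Blend normalized keyword and vector scores into one ranking.

    A document missing from one result list contributes 0 for that signal.
    Ties are broken by document id so the output is deterministic.
    """
    kw = min_max_normalize(keyword_scores)
    vec = min_max_normalize(vector_scores)
    fused = {
        doc_id: alpha * vec.get(doc_id, 0.0) + (1 - alpha) * kw.get(doc_id, 0.0)
        for doc_id in set(kw) | set(vec)
    }
    return sorted(fused.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical scores: doc_a matches the exact keywords, doc_b and doc_c
# are semantically closer to the query.
keyword_scores = {"doc_a": 12.0, "doc_b": 3.0}                  # e.g. BM25
vector_scores = {"doc_b": 0.91, "doc_c": 0.88, "doc_a": 0.40}   # e.g. cosine

vector_leaning = [doc for doc, _ in hybrid_rank(keyword_scores, vector_scores, alpha=0.7)]
keyword_only = [doc for doc, _ in hybrid_rank(keyword_scores, vector_scores, alpha=0.0)]
```

With these sample scores, leaning toward the vector signal puts `doc_b` first, while a keyword-only blend puts `doc_a` first. Tuning that weight against real queries is the part that takes time in practice.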

“The future is already here — it’s just not evenly distributed.” — William Gibson

That quote fits vector databases better than most people expect. The technology is mature enough to run production systems now, but the best fit still depends on whether your workload is dominated by latency, scale, or retrieval quality.

One practical takeaway: if your RAG app needs tight metadata filters, Qdrant is usually the first place to look. If your roadmap includes cluster growth and very high throughput, Milvus deserves the heavier evaluation. If search relevance depends on both text matching and semantic retrieval, Weaviate makes the most sense.

Benchmarks tell a useful story, if you read them carefully

The reported benchmark figures were measured at different dataset sizes and recall targets, so avoid treating them as a single league table. Still, they show a clear pattern. Qdrant is tuned for fast, filtered retrieval at large but manageable scale. Milvus is built for very large datasets and high query volume. Weaviate trades some raw speed for richer search behavior.

Here are the figures as reported, each with its own version, dataset size, and recall target:

- Qdrant v1.14: 100M vectors, 95% recall, under 100ms latency

- Milvus v2.4.0: 1B vectors, 90% recall, 100,000+ QPS, under 200ms latency

- Weaviate v1.23.0: 500M vectors, 92% recall, 150ms latency

The hardware story matters too. Qdrant is described with 4–8 CPU cores, 8–16 GB RAM, and SSD storage. Milvus wants 16–32 cores, 32–64 GB RAM, NVMe storage, and optional GPUs. Weaviate lands between those two with 8–16 cores, 16–32 GB RAM, and SSD storage.

Those resource profiles tell you something the marketing pages often hide: Milvus is the most infrastructure-hungry option, but it also gives the biggest ceiling. Qdrant is the easiest to keep efficient. Weaviate asks for more than Qdrant, less than Milvus, and pays you back with search flexibility.
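A quick way to sanity-check any resource profile is to estimate the raw footprint of the vectors themselves. The sketch below is back-of-envelope arithmetic only. The 768-dimension figure is an assumption for illustration (the comparison does not state a dimension), the helper names are hypothetical, and index structures and payloads are ignored.

```python
def raw_vector_bytes(num_vectors: int, dim: int, bytes_per_component: int = 4) -> int:
    """Bytes needed to store the vectors alone (no index, no payload).

    bytes_per_component is 4 for float32 and 1 for 8-bit scalar quantization.
    """
    return num_vectors * dim * bytes_per_component


def to_gib(num_bytes: int) -> float:
    """Convert bytes to gibibytes (2**30 bytes)."""
    return num_bytes / 2**30


NUM_VECTORS = 100_000_000  # the 100M-vector scale cited in the comparison
DIM = 768                  # assumed embedding dimension, not from the source

float32_gib = to_gib(raw_vector_bytes(NUM_VECTORS, DIM, 4))
int8_gib = to_gib(raw_vector_bytes(NUM_VECTORS, DIM, 1))

print(f"float32: {float32_gib:.1f} GiB, int8: {int8_gib:.1f} GiB")
```

Under those assumptions, 100 million float32 vectors come to roughly 286 GiB before any index overhead, and about 71.5 GiB at one byte per component. How much of the collection sits in RAM versus on disk or in quantized form therefore has a large effect on the hardware you actually need.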

Feature choices decide the developer experience

Feature sets are where teams usually make their final call. Qdrant’s payload filtering is a big deal if you need to constrain retrieval by tenant, document type, timestamp, or access control. Its Universal Query API also makes multi-stage retrieval patterns easier to express.
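To make the idea of filtered retrieval concrete, here is a brute-force sketch in plain Python. It is not Qdrant client code. The point layout, field names, and `filtered_search` helper are hypothetical. A real engine applies the filter inside its index rather than scanning every point, but the result it aims for is the same: only points whose metadata matches are ranked.

```python
import math
from typing import Any, Dict, List, Tuple

Point = Dict[str, Any]  # {"id": int, "vector": [float, ...], "payload": {...}}


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(
    points: List[Point],
    query: List[float],
    must: Dict[str, Any],
    limit: int = 3,
) -> List[Tuple[int, float]]:
    """Rank only the points whose payload equals every key/value in `must`."""
    candidates = [
        p for p in points
        if all(p["payload"].get(key) == value for key, value in must.items())
    ]
    scored = [(p["id"], cosine(p["vector"], query)) for p in candidates]
    return sorted(scored, key=lambda pair: -pair[1])[:limit]


# Hypothetical multi-tenant corpus.
points = [
    {"id": 1, "vector": [1.0, 0.0], "payload": {"tenant": "acme", "doc_type": "faq"}},
    {"id": 2, "vector": [0.9, 0.1], "payload": {"tenant": "globex", "doc_type": "faq"}},
    {"id": 3, "vector": [0.0, 1.0], "payload": {"tenant": "acme", "doc_type": "faq"}},
    {"id": 4, "vector": [0.8, 0.2], "payload": {"tenant": "acme", "doc_type": "contract"}},
]

hits = filtered_search(points, query=[1.0, 0.0], must={"tenant": "acme", "doc_type": "faq"})
hit_ids = [point_id for point_id, _ in hits]
```

Point 2 is very close to the query but belongs to another tenant, so it never appears in `hits`. That is the behavior you want for access control, and it is worth checking under load because selective filters can change latency noticeably.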

Milvus brings a wider menu of index types, including IVF, HNSW, and SCANN. That flexibility helps when you need to tune for recall, speed, or memory use. It also fits distributed deployments better than many smaller vector stores, especially when the workload keeps growing.
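The recall-versus-speed tradeoff behind an IVF-style index can be shown with a toy example. The sketch below is a generic illustration of the inverted-file idea, not Milvus code: centroids are hand-picked rather than learned with k-means, and the function names and the `nprobe` parameter name are assumptions chosen to match common usage.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def dist(a: Vector, b: Vector) -> float:
    """Euclidean distance between two vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def build_ivf(vectors: Dict[str, Vector], centroids: List[Vector]) -> Dict[int, List[str]]:
    """Assign each vector id to the inverted list of its nearest centroid."""
    lists: Dict[int, List[str]] = {i: [] for i in range(len(centroids))}
    for vec_id, vec in vectors.items():
        nearest = min(range(len(centroids)), key=lambda i: dist(vec, centroids[i]))
        lists[nearest].append(vec_id)
    return lists


def ivf_search(query, vectors, centroids, lists, nprobe: int, k: int) -> List[str]:
    """Scan only the `nprobe` lists whose centroids are closest to the query."""
    probe = sorted(range(len(centroids)), key=lambda i: dist(query, centroids[i]))[:nprobe]
    candidates = [vec_id for i in probe for vec_id in lists[i]]
    return sorted(candidates, key=lambda vec_id: dist(query, vectors[vec_id]))[:k]


# Toy data: four points on a line, two hand-picked centroids.
vectors = {"a": (1.0, 0.0), "b": (4.0, 0.0), "c": (6.0, 0.0), "d": (9.0, 0.0)}
centroids = [(0.0, 0.0), (10.0, 0.0)]
lists = build_ivf(vectors, centroids)

query = (4.9, 0.0)
fast = ivf_search(query, vectors, centroids, lists, nprobe=1, k=2)
thorough = ivf_search(query, vectors, centroids, lists, nprobe=2, k=2)
```

Probing one list scans half the data but misses `c`, the second-nearest point, because it sits just across the cluster boundary. Probing both lists recovers it at the cost of scanning everything. Index parameters of this kind are what you end up tuning when you trade recall against latency.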

Weaviate’s appeal is different. It integrates well with tools like Hugging Face and supports multimodal embeddings, which makes it useful for teams building semantic search across text, images, or audio. Its GraphQL API also gives developers a single query surface for structured and unstructured data.

- Qdrant: strong metadata filtering, quantization options, low-latency retrieval

- Milvus: IVF, HNSW, SCANN, distributed scaling, GPU support

- Weaviate: GraphQL, hybrid ranking, multimodal and ML integrations

There is also a cost angle. Qdrant often wins when you care about efficiency per node. Milvus can be expensive to run, but that cost buys scale and throughput. Weaviate is attractive when the engineering team wants fewer moving parts around search and embedding workflows.

If your team is already using Kubernetes, Milvus's documentation will feel more aligned with cluster operations. If you want a smaller operational footprint and strong filtering, Qdrant is easier to reason about. If product search needs a blend of semantic and keyword ranking, Weaviate is the most natural fit.

Which one should you pick for RAG?

For a RAG system, the right answer depends on the retrieval pattern, not the model brand. If your app serves live chat, internal search, or recommendation results with strict filters, Qdrant is usually the cleanest choice. If you are building a large-scale retrieval layer for enterprise search or personalization across huge corpora, Milvus makes more sense. If your users expect keyword precision and semantic recall in the same interface, Weaviate is the strongest match.

My read is simple: Qdrant is the best default for teams that want speed and control, Milvus is the best bet when scale becomes the main problem, and Weaviate is the best fit when search quality depends on mixing lexical and semantic signals. That is a practical way to think about the choice, and it will save you from overbuying infrastructure you do not need.

If you are still deciding, start with a small benchmark using your own data. Measure query latency, recall, filter complexity, and operational overhead. Those four measurements will tell you more than any vendor comparison page ever will.
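A small harness for the latency and recall half of that benchmark might look like the sketch below. It assumes you can wrap each database behind a `search_fn(query, k)` callable that returns ranked ids, and that you have exact top-k ground truth from a brute-force pass. Those names and the `fake_search` stand-in are hypothetical, not part of any client library.

```python
import math
import time
from typing import Callable, Dict, List, Sequence


def recall_at_k(retrieved_ids: Sequence, relevant_ids: Sequence, k: int) -> float:
    """Fraction of the true top-k neighbours found in the retrieved top-k."""
    if k <= 0:
        raise ValueError("k must be positive")
    truth = set(relevant_ids[:k])
    if not truth:
        return 0.0
    return len(truth & set(retrieved_ids[:k])) / len(truth)


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def benchmark(
    search_fn: Callable[[Sequence[float], int], List],
    queries: List[Sequence[float]],
    ground_truth: List[List],
    k: int = 10,
) -> Dict[str, float]:
    """Run every query once and report mean recall@k plus latency percentiles."""
    latencies_ms, recalls = [], []
    for query, truth in zip(queries, ground_truth):
        start = time.perf_counter()
        retrieved = search_fn(query, k)
        latencies_ms.append((time.perf_counter() - start) * 1000)
        recalls.append(recall_at_k(retrieved, truth, k))
    return {
        "mean_recall": sum(recalls) / len(recalls),
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
    }


def fake_search(query: Sequence[float], k: int) -> List[int]:
    """Stand-in for a real client call; always returns ids 0..k-1."""
    return list(range(k))


queries = [[0.1, 0.2]] * 5
ground_truth = [list(range(10))] * 5
report = benchmark(fake_search, queries, ground_truth, k=10)
```

Swap `fake_search` for a thin wrapper around each candidate database and run the same queries and filters against all of them. Reporting a tail percentile alongside the median matters because a RAG app is judged by its slow requests, not its typical ones.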

For teams already iterating on retrieval pipelines, the next move is clear: test one database with your real embeddings and real metadata, then compare it against your current stack under load. The winner is the one that keeps latency predictable when your corpus doubles and your users stop forgiving slow search.
