Stop Sending IDE-Catchable AI Code Errors to Review

AI-generated code should be screened in the IDE before it reaches human review.

The reason is simple: code review is a scarce channel for human judgment, and AI has increased the amount of code that competes for it. JetBrains cites its own 2025 developer survey of more than 24,000 developers showing that AI use is mostly ad hoc, not governed by a consistent policy. It also points to studies suggesting that about 20% to 25% of AI code hallucinations are detectable with automated structural and static analysis, which means a meaningful share of the avoidable mistakes can be removed before a pull request ever exists. That is the point. If the error is catchable in the editor, shipping it to review is not rigor. It is waste.

Review is the bottleneck, not AI output

More AI output means more review load, and the data backs that up. DX’s Q4 2025 data on 51,000 developers found that daily AI users merge 60% more pull requests per week than light users. A separate 2025 randomized controlled trial across three enterprise companies found that developers with AI assistants completed 26% more tasks per week than the control group. These are productivity gains, but they do not disappear into the ether. They land on reviewers’ desks as more diffs, more comments, and more decisions.

That matters because reviewer attention is finite. Long before generative AI, researchers showed that review rate, meaning how quickly code is read, was a statistically significant factor in defect removal effectiveness, even when controlling for developer ability. More time spent per line reviewed consistently correlated with more defects found. The conclusion is uncomfortable but clear: if you flood review with avoidable structural mistakes, you reduce the value of the review process itself. The reviewer is not the right place to catch what a machine can already recognize.
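The squeeze on review time can be made concrete with a little arithmetic. The sketch below assumes a fixed weekly review budget and applies the 60% increase in merged pull requests to a whole team; the 600-minute budget and the 20-PR baseline are hypothetical numbers chosen for illustration, not figures from any of the studies cited here.

```python
def minutes_per_pr(review_minutes_per_week: float, prs_per_week: float) -> float:
    """Average reviewer minutes available for each pull request."""
    if prs_per_week <= 0:
        raise ValueError("prs_per_week must be positive")
    return review_minutes_per_week / prs_per_week


# Hypothetical baseline: 600 reviewer minutes and 20 pull requests per week.
baseline = minutes_per_pr(600, 20)

# Same review budget, 60% more pull requests (assumed to apply team-wide).
with_ai = minutes_per_pr(600, 20 * 1.6)

# Fraction of per-PR review time lost when volume rises and the budget does not.
drop = 1 - with_ai / baseline

print(f"{baseline:.2f} -> {with_ai:.2f} minutes per PR ({drop:.1%} less)")
```

Under these assumptions each pull request gets 37.5% less reviewer time, which is the direction the review-rate research warns about.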

AI changes the kind of code that arrives

AI code does not just arrive in larger volumes. It arrives with a different defect profile. A 2025 analysis of more than 500,000 code samples found that AI-generated code contains more unused constructs, hardcoded values, and higher-risk security vulnerabilities than human-written code. Another 2025 study identified defect categories that have no real equivalent in human-written code. That means human review is now being asked to spot patterns that are both more frequent and more alien to normal codebases.

The review problem gets worse because plausibility lowers suspicion. A 2026 study found that AI-generated pull requests with nearly twice the code redundancy drew fewer negative reactions from reviewers than human-written ones. In other words, the code looked busy, polished, and familiar enough to pass through with less friction. That is exactly why IDE-level structural analysis belongs upstream. It does not get impressed by surface fluency. It checks whether the code actually fits the project, the language rules, and the surrounding structure.

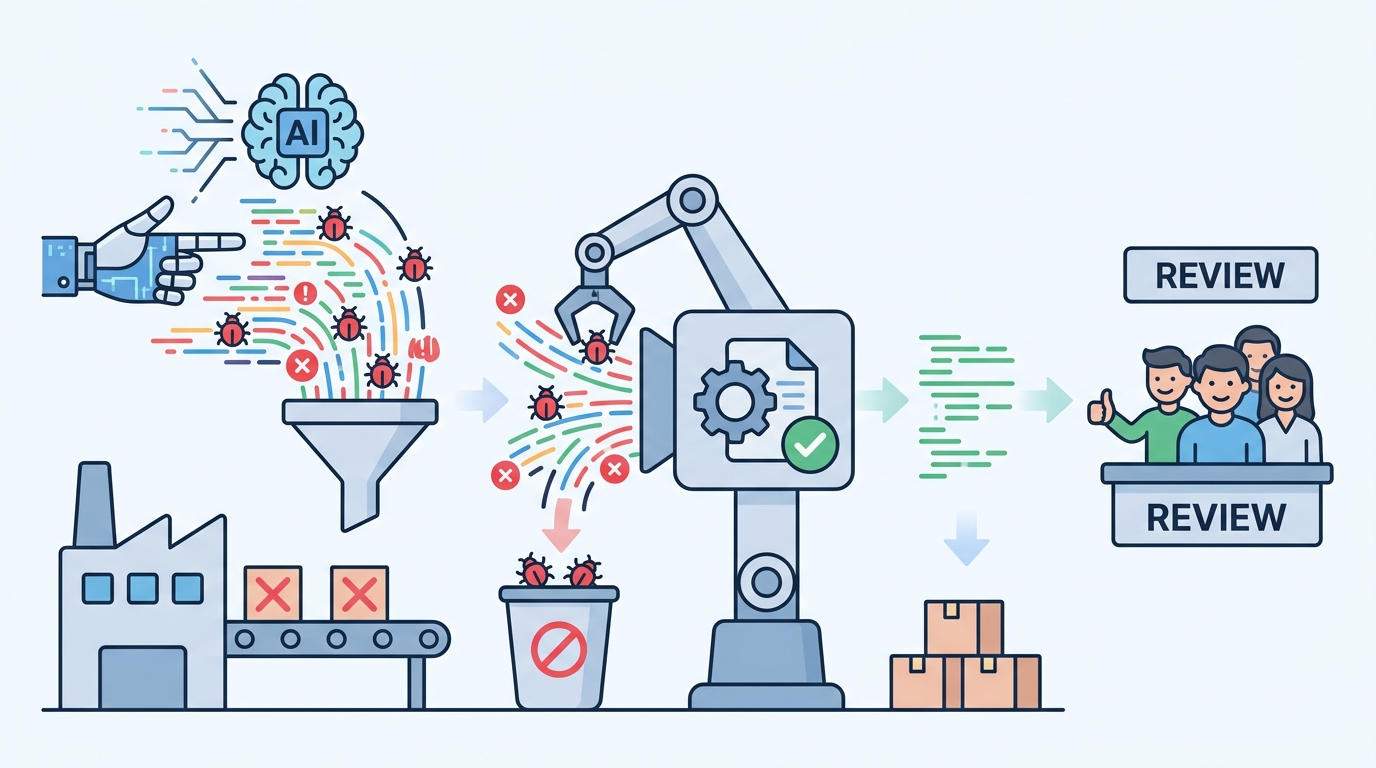

The pipeline should catch what the editor can already see

The strongest argument for moving checks earlier is that organizations already know how to do it when scale forces the issue. Google, while running LLM-powered code migrations, found that reviewers had to revert AI-generated changes often enough that it invested in automated verification to reduce the burden. Uber, handling tens of thousands of code changes weekly, built an automated review system that runs before human reviewers engage. These are not philosophical gestures. They are operational responses to a predictable failure mode.

JetBrains is right to frame this as an environment problem, not just a policy problem. Developers use an average of 3.6 development environments, according to the 2025 Stack Overflow Developer Survey. That means teams cannot rely on everyone having the same safeguards, the same plugin stack, or the same quality of structural analysis. If one developer’s setup catches AI-generated misuse and another’s does not, your review process inherits the weakest configuration in the group. Enforcement belongs where the code first takes shape, and for many teams that is the IDE, not the pull request.
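The "weakest configuration" point can be sketched as a set comparison. The example below is only an illustration: the environment names and check names are hypothetical, and a real team would have to gather this inventory from its own tooling.

```python
def enforced_everywhere(checks_by_env: dict[str, set[str]]) -> set[str]:
    """Checks that every environment runs: the only ones review can rely on."""
    if not checks_by_env:
        return set()
    return set.intersection(*checks_by_env.values())


def coverage_gaps(checks_by_env: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each environment, the checks that some other environment runs but it does not."""
    all_checks = set().union(*checks_by_env.values())
    return {
        env: all_checks - checks
        for env, checks in checks_by_env.items()
        if all_checks - checks
    }


# Hypothetical inventory of structural checks per development environment.
team = {
    "ide_a": {"unused-import", "hardcoded-secret", "unresolved-symbol"},
    "ide_b": {"unused-import", "unresolved-symbol"},
    "terminal_editor": {"unused-import"},
}

print(enforced_everywhere(team))
print(coverage_gaps(team))
```

In this made-up team only one check is guaranteed before review, no matter how capable the best-configured editor is.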

The counter-argument

The best objection is that review should stay broad because no automated system catches everything. That is true. JetBrains itself cites studies suggesting that roughly 44% of AI hallucination errors are not reliably surfaced by automated checks. Teams also need human reviewers for semantics, architecture, product intent, and tradeoffs that no static analyzer can infer. If review becomes too automated, organizations risk mistaking syntactic cleanliness for software quality.

That objection is real, but it does not defend sending obvious structural mistakes to humans. It only proves that review should focus on what humans are uniquely good at. The limit is clear: automation will never replace judgment. But that is exactly why it should remove the repetitive, catchable defects first. If a tool can identify unused constructs, hardcoded values, dependency mismatches, or code that violates the project’s structure, leaving that work for humans is a misallocation of scarce attention.
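To show how mechanical some of these defects are, here is a minimal sketch built on Python's standard `ast` module. It flags two of the categories named above: imports that are never used, and string literals assigned to names that look like credentials. The name hints and the sample source are assumptions made for illustration, and the checks are far cruder than what an IDE's codebase-aware analysis performs.

```python
import ast

# Assumed naming hints for credential-like variables; illustrative, not exhaustive.
SECRET_HINTS = ("password", "secret", "token", "api_key")


def find_unused_imports(source: str) -> list[str]:
    """Return imported names that are never referenced in the module."""
    tree = ast.parse(source)
    imported = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                # "import a.b" binds the top-level name "a".
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                imported.add(alias.asname or alias.name)
    used = {n.id for n in ast.walk(tree) if isinstance(n, ast.Name)}
    return sorted(imported - used)


def find_hardcoded_secrets(source: str) -> list[tuple[str, int]]:
    """Return (name, line) pairs where a string literal is assigned to a secret-like name."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Assign)
            and isinstance(node.value, ast.Constant)
            and isinstance(node.value.value, str)
        ):
            for target in node.targets:
                if isinstance(target, ast.Name) and any(
                    hint in target.id.lower() for hint in SECRET_HINTS
                ):
                    findings.append((target.id, node.lineno))
    return sorted(findings)


# Hypothetical snippet of the kind an assistant might generate.
SAMPLE = '''
import os
import json

API_KEY = "abc123"

def home():
    return os.path.expanduser("~")
'''

print(find_unused_imports(SAMPLE))
print(find_hardcoded_secrets(SAMPLE))
```

Neither check requires judgment about design or intent, which is the argument for running this class of analysis before a reviewer ever sees the diff.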

What to do with this

If you are an engineer, make the editor the first gate and the review the second. If you are a PM or founder, stop measuring AI adoption by output volume alone and start measuring how much of that output is structurally validated before a pull request is created. Standardize deep codebase-aware checks across the team’s IDEs, then back them with CI enforcement for the same classes of errors. Human review should be reserved for design, intent, and risk. Everything else that a machine can reliably catch belongs upstream.
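One way to measure validation rather than volume is to track the share of AI-assisted pull requests that passed structural checks before they were opened. The sketch below assumes a team can label each pull request with two flags; the `PullRequest` record and its field names are hypothetical and do not correspond to any particular platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    number: int
    ai_assisted: bool            # hypothetical label, e.g. set by the author
    validated_before_open: bool  # hypothetical label, e.g. set by a local gate


def validated_share(prs: list[PullRequest]) -> float:
    """Fraction of AI-assisted PRs that were structurally validated before opening."""
    ai_prs = [pr for pr in prs if pr.ai_assisted]
    if not ai_prs:
        return 0.0
    return sum(pr.validated_before_open for pr in ai_prs) / len(ai_prs)


# Made-up week of pull requests.
week = [
    PullRequest(101, ai_assisted=True, validated_before_open=True),
    PullRequest(102, ai_assisted=True, validated_before_open=False),
    PullRequest(103, ai_assisted=False, validated_before_open=False),
    PullRequest(104, ai_assisted=True, validated_before_open=True),
]

print(f"{validated_share(week):.0%} of AI-assisted PRs were validated upstream")
```

A number like this says more about review load than a raw count of merged pull requests, because it tracks how much avoidable work still reaches human reviewers.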
