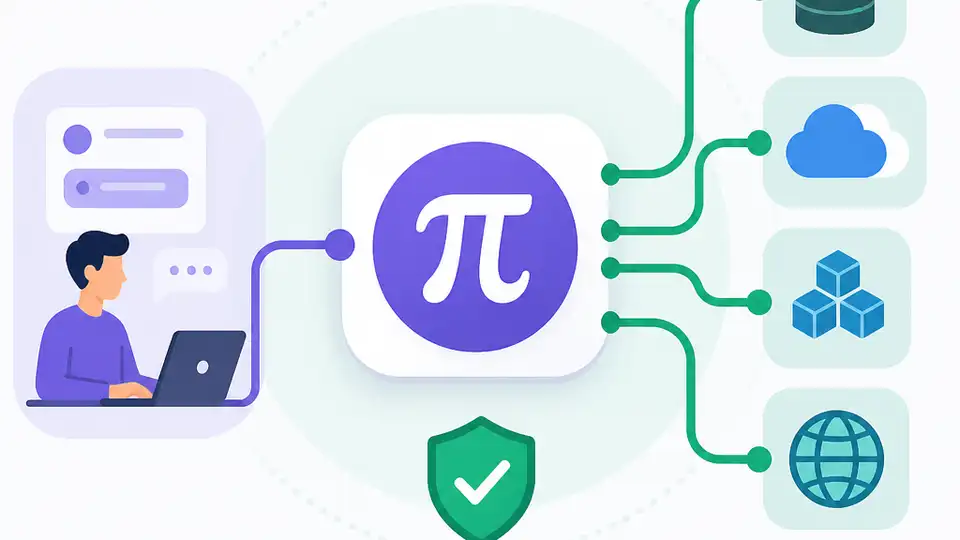

Why Pi MCP Adapter Is the Right Way to Use MCP

Pi MCP Adapter is the right answer to MCP bloat because it preserves the ecosystem’s utility while collapsing the context cost to a single proxy tool.

The project’s GitHub repository description makes the case in plain terms: a single MCP server can burn 10k+ tokens in tool definitions before the agent does any work. That is not a theoretical annoyance; it is a direct tax on every conversation. Pi’s adapter changes the economics by exposing one proxy tool of roughly 200 tokens, then loading tools on demand. With a few servers connected, that is the difference between starting a session with a large share of your context window already spent and starting with room to think.
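A rough worked estimate makes the scale concrete. The 10k-token lower bound per server and the ~200-token proxy come from the repository; the server count N = 6 and the context window W = 128,000 tokens are illustrative assumptions, as is the premise that one proxy tool fronts all servers.

```latex
\begin{aligned}
\text{eager share} &\geq \frac{N \cdot 10{,}000}{W} = \frac{6 \cdot 10{,}000}{128{,}000} \approx 0.47 \\
\text{proxy share} &\approx \frac{200}{W} = \frac{200}{128{,}000} \approx 0.0016
\end{aligned}
```

Under these assumptions, eager loading spends close to half the window on tool definitions before the first user turn, while the proxy stays well under one percent and does not grow with N.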

The first reason: MCP’s default shape is wasteful

Most criticism of MCP misses the real problem. The protocol is not broken, but the common implementation pattern is bloated. If an agent has to ingest a large tool catalog up front, the model pays for every tool definition whether it uses that tool or not. Pi MCP Adapter attacks that overhead by making discovery lazy: the agent searches tool metadata, then calls only the tool it needs. That is a practical improvement, not a cosmetic one.
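Lazy discovery comes down to keeping two things apart: cheap metadata that can be searched, and full schemas that are only materialized once a tool is chosen. The sketch below illustrates that idea under stated assumptions; `LazyCatalog`, `ToolEntry`, and `loadSchema` are hypothetical names, not the adapter's internals.

```typescript
// Hypothetical metadata entry: the name and one-line description are cheap to
// search, while the full input schema is produced only when a tool is chosen.
interface ToolEntry {
  server: string;
  name: string;
  description: string;
  loadSchema: () => object; // deferred: never called during search
}

class LazyCatalog {
  private entries: ToolEntry[];
  private loaded = new Map<string, object>(); // schemas fetched so far, by id

  constructor(entries: ToolEntry[]) {
    this.entries = entries;
  }

  // Match the query against names and descriptions only; return tool ids.
  search(query: string): string[] {
    const q = query.toLowerCase();
    return this.entries
      .filter(
        (e) =>
          e.name.toLowerCase().includes(q) ||
          e.description.toLowerCase().includes(q),
      )
      .map((e) => `${e.server}/${e.name}`);
  }

  // Load the full schema for one selected tool, caching it for later calls.
  schemaFor(id: string): object {
    const cached = this.loaded.get(id);
    if (cached !== undefined) return cached;
    const entry = this.entries.find((e) => `${e.server}/${e.name}` === id);
    if (entry === undefined) throw new Error(`unknown tool: ${id}`);
    const schema = entry.loadSchema();
    this.loaded.set(id, schema);
    return schema;
  }

  // Number of full schemas materialized so far.
  loadedCount(): number {
    return this.loaded.size;
  }
}
```

A search never calls `loadSchema`, so a catalog of any size costs nothing until `schemaFor` pulls in the one schema the agent actually needs. Repeat lookups hit the cache.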

The repository’s own examples make the point concrete: instead of stuffing dozens of tool definitions into the prompt, the agent searches for a screenshot tool, then invokes it by name with a compact JSON string of arguments. Two calls replace 26 tool definitions that would otherwise clutter the context. That matters because context is not free. Every extra tool schema competes with the user’s actual task, and Pi’s design keeps the budget focused on work rather than inventory.
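The two-call flow can be pictured as one entry point that either searches or dispatches. This is a minimal sketch of the pattern. The request fields `search`, `tool`, and `args` and the `makeProxy` helper are hypothetical and are not the adapter's documented parameters.

```typescript
// Hypothetical request shapes for one proxy tool. The field names are
// illustrative, not the adapter's documented schema.
type ProxyRequest = { search: string } | { tool: string; args: string };

type Handler = (args: Record<string, unknown>) => unknown;

// Build a single entry point that fronts many tools.
function makeProxy(tools: Map<string, Handler>): (req: ProxyRequest) => unknown {
  return (req) => {
    if ("search" in req) {
      // First call: find candidate tool names without exposing their schemas.
      const q = req.search.toLowerCase();
      return Array.from(tools.keys()).filter((name) =>
        name.toLowerCase().includes(q),
      );
    }
    // Second call: parse the compact JSON argument string and dispatch.
    const handler = tools.get(req.tool);
    if (handler === undefined) throw new Error(`unknown tool: ${req.tool}`);
    const parsed: unknown = JSON.parse(req.args);
    if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
      throw new Error("args must be a JSON object");
    }
    return handler(parsed as Record<string, unknown>);
  };
}
```

Only the single proxy signature has to live in the prompt. A call like `proxy({ tool: "take_screenshot", args: '{"url":"https://example.com"}' })` reaches the handler with the string parsed into an object, and malformed or non-object arguments fail loudly instead of being passed through.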

The second reason: live sessions beat one-shot tool calls

Pi MCP Adapter is not just a compression layer. It also supports conversational UIs that stay alive across turns, which is the real leap in usefulness. Messages from the UI are retrieved with `mcp({ action: "ui-messages" })`, and the agent responds within a shared session. When the agent calls the same tool again while the UI is already open, the adapter pushes the new result into the existing window instead of replacing it. That means the user sees a living artifact, not a series of disposable outputs.

This is exactly the kind of interaction agents need if they are going to do more than autocomplete text. A chart can be refined in place. A browser task can keep its state. A user can answer a prompt without losing the interface. The repository frames this as live updates, and that is the correct framing. Agents become more useful when they can collaborate through a persistent surface, not just emit isolated tool results into a chat log.
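The session-reuse behavior described in this section reduces to a small piece of bookkeeping. The sketch below makes two assumptions: at most one open window per tool, and UI messages that are plain strings. `UiSessionManager`, `present`, `postFromUi`, and `takeMessages` are hypothetical names that model the described behavior, not the adapter's code.

```typescript
// Hypothetical model of UI session reuse, assuming at most one open window per
// tool. This mirrors the described behavior, not the adapter's code.
interface UiSession {
  id: number;
  tool: string;
  results: unknown[]; // every result pushed into this window, oldest first
}

class UiSessionManager {
  private sessions = new Map<string, UiSession>();
  private inbox: string[] = []; // messages the user sent from any open window
  private nextId = 1;

  // First call opens a window; repeat calls push into the existing one.
  present(tool: string, result: unknown): UiSession {
    const open = this.sessions.get(tool);
    if (open !== undefined) {
      open.results.push(result);
      return open;
    }
    const session: UiSession = { id: this.nextId++, tool, results: [result] };
    this.sessions.set(tool, session);
    return session;
  }

  // Called from the UI side when the user answers a prompt or clicks.
  postFromUi(message: string): void {
    this.inbox.push(message);
  }

  // Agent side: drain pending UI messages, analogous in spirit to the
  // ui-messages action (the real payload format is not modeled here).
  takeMessages(): string[] {
    const pending = this.inbox;
    this.inbox = [];
    return pending;
  }

  // Closing a window means the next call to that tool opens a fresh one.
  close(tool: string): void {
    this.sessions.delete(tool);
  }
}
```

Keying sessions by tool is the design choice that turns a second chart call into a refinement of the existing chart instead of a new artifact. Draining the inbox on read keeps the agent from processing the same user reply twice.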

The counter-argument

The strongest argument against Pi’s approach is that it adds another layer between the agent and the tools, and layers can hide complexity instead of removing it. Mario’s critique, which the repository cites, is not silly: if a workflow can be handled by a few simple CLI tools, why keep MCP in the stack at all? For teams with a narrow, stable automation surface, direct scripts are easier to reason about and easier to secure. They avoid the indirection of proxies, metadata caches, and adapter-specific config files.

That critique is valid in one narrow case: when the tool set is tiny and the workflow is static, MCP is unnecessary ceremony. But that is not the world most agent builders operate in. The moment you need browsers, databases, APIs, and UI surfaces together, the “just write CLI tools” answer stops scaling. Pi’s adapter accepts that reality and makes the tradeoff explicit: keep the MCP ecosystem, remove the prompt tax. That is the better engineering choice for multi-tool agents.

What to do with this

If you are an engineer or founder building an agent product, stop treating tool exposure as a binary choice between “everything in context” and “no MCP at all.” Use a proxy-first adapter pattern for broad tool ecosystems, reserve direct tools for the few commands that deserve first-class visibility, and make lazy loading the default. If your product includes interactive UI, adopt session reuse and in-place updates immediately. The right metric is not how many tools you can advertise, but how much of the context window you leave for the actual task.
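The proxy-first recommendation can be expressed as a per-tool exposure flag plus a budget check. This is a sketch under assumptions. Per-tool token sizes are estimates you would supply, the 200-token proxy cost is the repository's rough figure, and `ToolSpec`, `upfrontTokens`, and `freeShare` are hypothetical names.

```typescript
// Hypothetical exposure policy: a few tools are registered directly, the rest
// sit behind one lazily loaded proxy tool.
type Exposure = "direct" | "proxy";

interface ToolSpec {
  name: string;
  schemaTokens: number; // assumed size of this tool's definition in the prompt
  exposure: Exposure;
}

// Approximate proxy cost, taken from the repository's ~200-token figure.
const PROXY_TOOL_TOKENS = 200;

// Tokens spent on tool definitions before the agent does any work.
function upfrontTokens(tools: ToolSpec[]): number {
  let total = 0;
  for (const t of tools) {
    if (t.exposure === "direct") total += t.schemaTokens;
  }
  // One proxy tool covers every proxied tool, however many there are.
  if (tools.some((t) => t.exposure === "proxy")) total += PROXY_TOOL_TOKENS;
  return total;
}

// Fraction of the context window still free for the actual task.
function freeShare(tools: ToolSpec[], contextWindow: number): number {
  return (contextWindow - upfrontTokens(tools)) / contextWindow;
}
```

As an example, take two direct tools at an assumed 300 tokens each and 24 proxied tools at an assumed 400 tokens each. `upfrontTokens` returns 800 for that hybrid setup and 10,200 if all 26 were exposed directly. The metric the article recommends is `freeShare`, not the tool count.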
